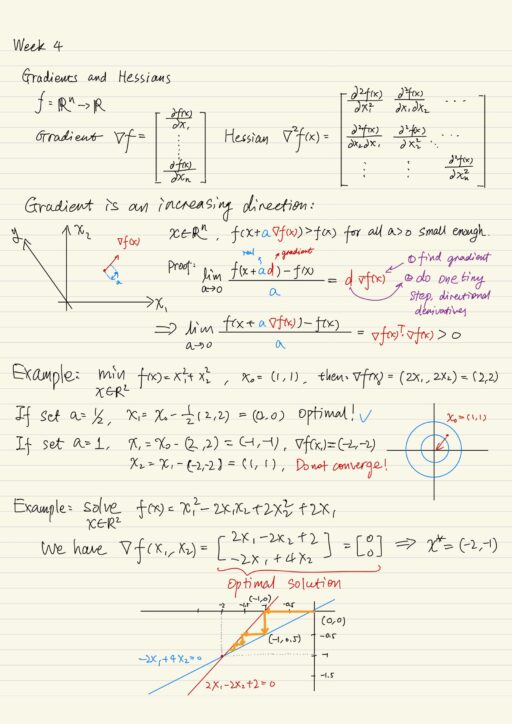

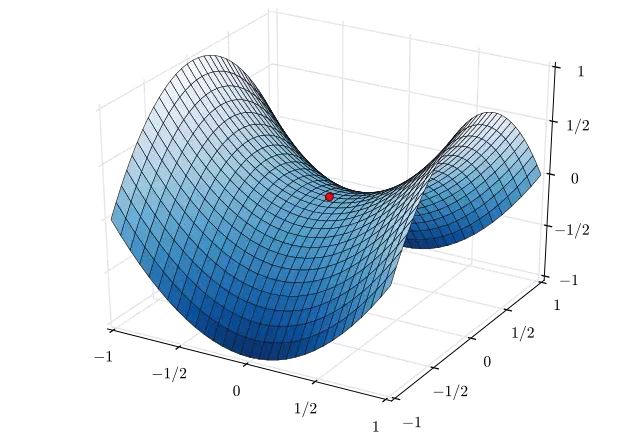

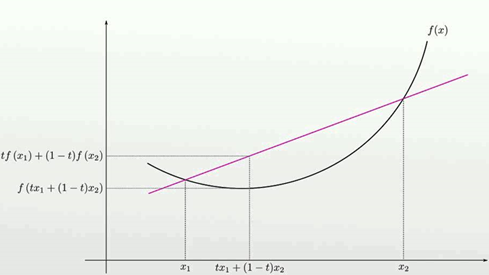

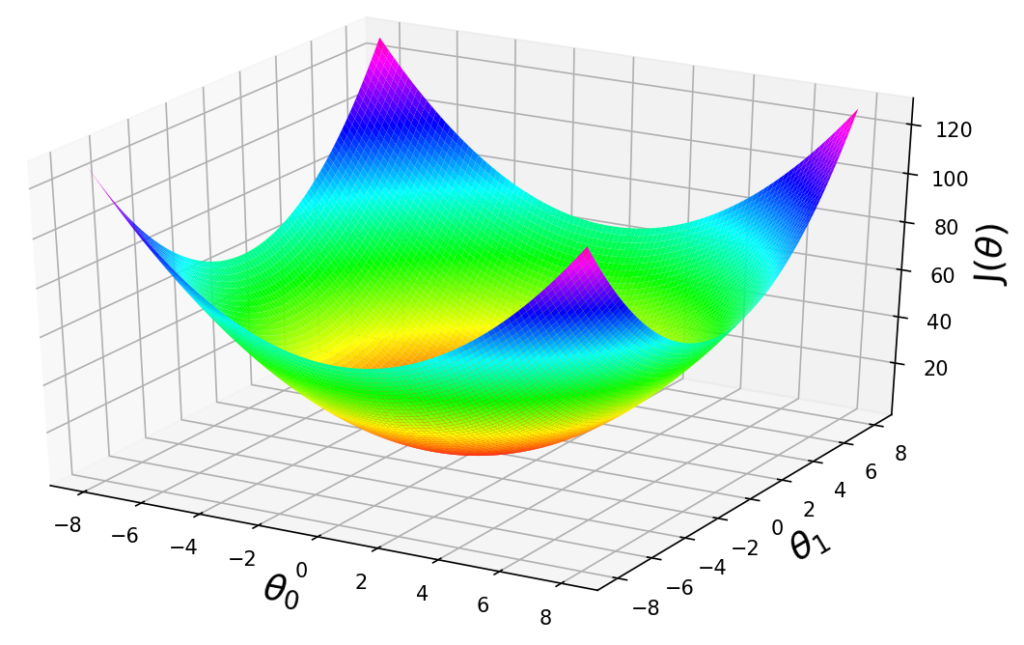

MathType - Gradient descent is an iterative optimization algorithm for finding local minima of multivariate functions. At each step, the algorithm moves in the direction opposite to the gradient, consequently reducing…

By a mysterious writer

Last updated 24 February 2025
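
The description above can be illustrated with a minimal sketch: each iteration steps against the gradient, scaled by a step size. The function name `gradient_descent`, the parameters `learning_rate` and `n_steps`, and the quadratic test function are all assumptions chosen for illustration; none of them come from the original post.

```python
import numpy as np

def gradient_descent(grad, x0, learning_rate=0.1, n_steps=100):
    """Repeatedly step against the gradient, starting from x0.

    grad: callable returning the gradient at a point (hypothetical helper).
    learning_rate, n_steps: illustrative defaults, not tuned values.
    """
    x = np.asarray(x0, dtype=float)
    for _ in range(n_steps):
        # Move opposite to the gradient, which locally decreases the function.
        x = x - learning_rate * grad(x)
    return x

# Assumed test function: f(x, y) = (x - 1)^2 + 2 * (y + 3)^2,
# whose only minimum is at (1, -3).
def grad_f(p):
    x, y = p
    return np.array([2.0 * (x - 1.0), 4.0 * (y + 3.0)])

minimum = gradient_descent(grad_f, x0=[0.0, 0.0])
print(minimum)  # close to [1, -3]
```

On this convex example the iterates settle at the single minimum. On a non-convex function the same loop may stop at a different local minimum depending on `x0`, and too large a `learning_rate` can make the iterates diverge.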

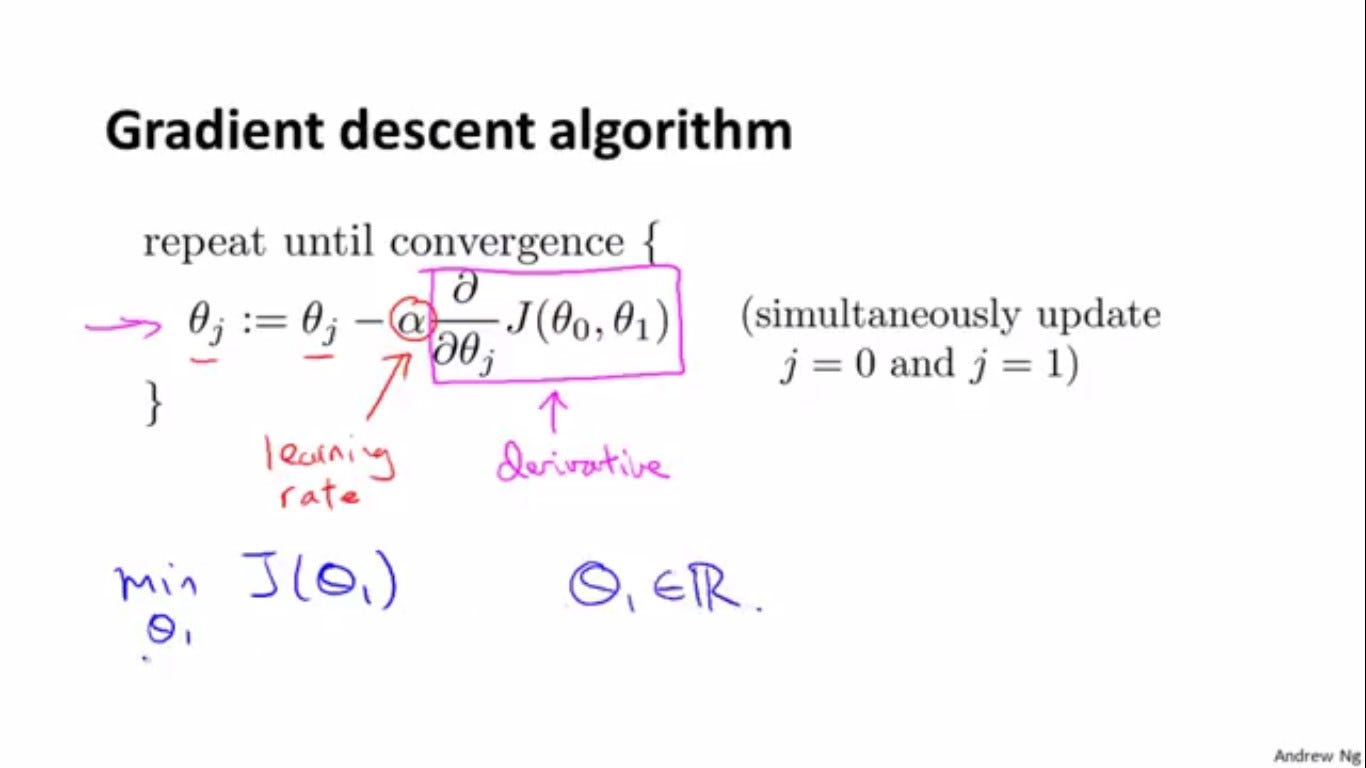

Optimization Techniques used in Classical Machine Learning ft: Gradient Descent, by Manoj Hegde

Gradient Descent Algorithm-Chain Rule-Directional Derivative, by Kamil Budagov

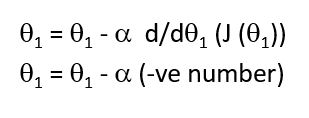

Gradient Descent Algorithm

Is there a mathematical proof of why the gradient descent algorithm always converges to the global/ local minimum if the learning rate is small enough? - Quora

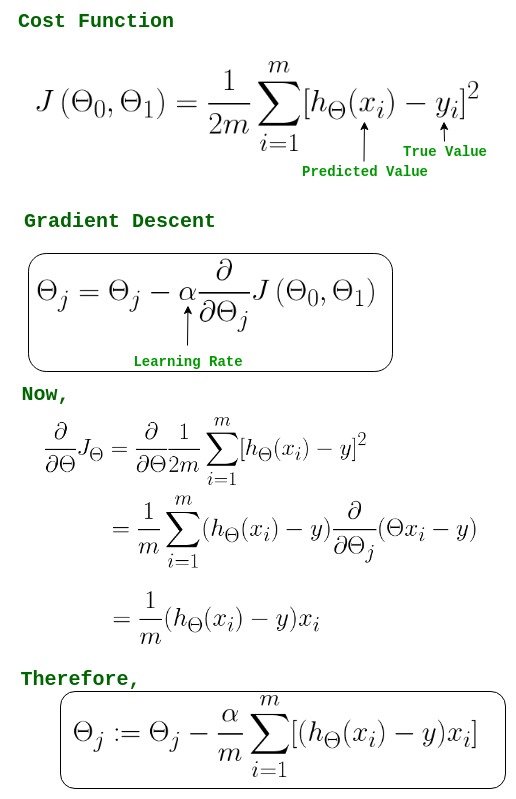

Gradient Descent in Linear Regression - GeeksforGeeks

Gradient descent optimization algorithm.

Gradient Descent For Multivariate Linear Regression - Stack Overflow

Gradient Descent Algorithm in Machine Learning - GeeksforGeeks

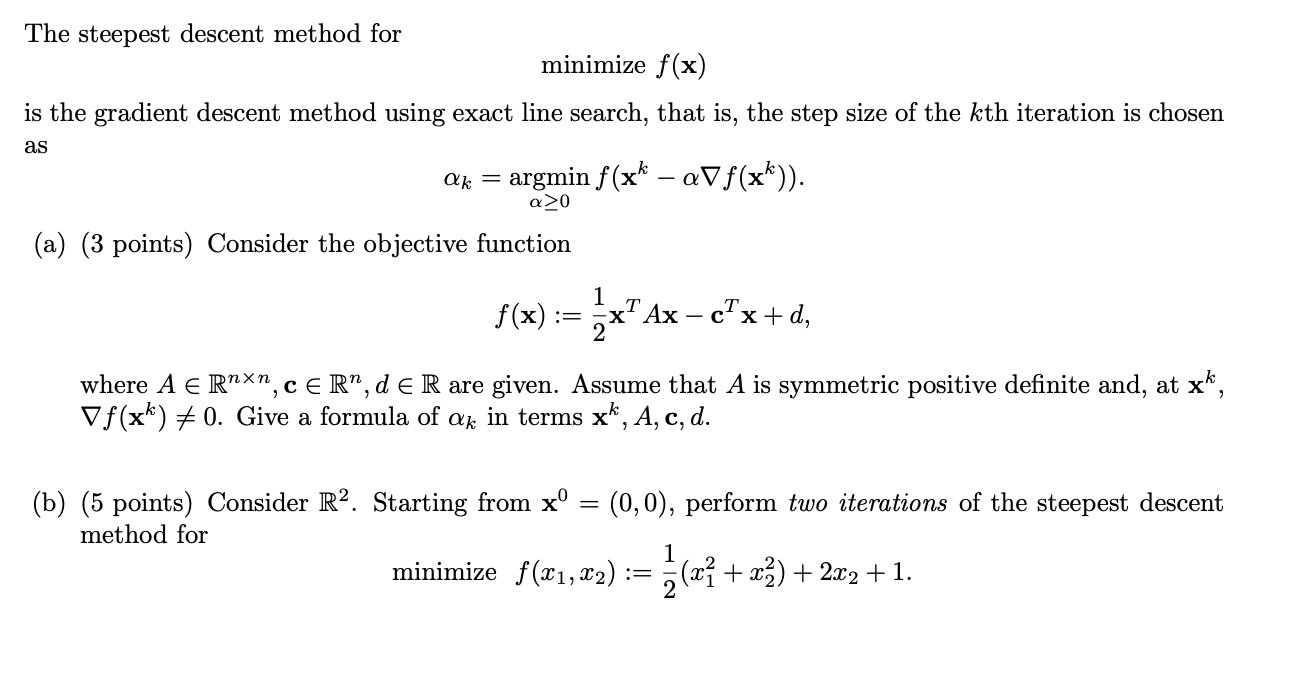

![[PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning](https://d3i71xaburhd42.cloudfront.net/a0174a41c7d682aeb1d7e7fa1fbd2404e037a638/11-Figure8.1-1.png)